AI causing some trouble at Spotify

Instead of eliminating work, AI is actually creating a lot more work for humans to do, just to undo its bad work.

One of the many issues that AI presents us with, as it rapidly proliferates and infiltrates our lives, is the thorny problem of copyright infringement.

Put incredibly simply, the machine learning kernel scrapes vast amounts of existing data and repackages it, without clear attribution, as something 'new'.

Labels like Universal Music Group (which controls about a third of the global music market) have demanded that sites like Spotify and Apple Music delete tracks that make unauthorised use of copyrighted material.

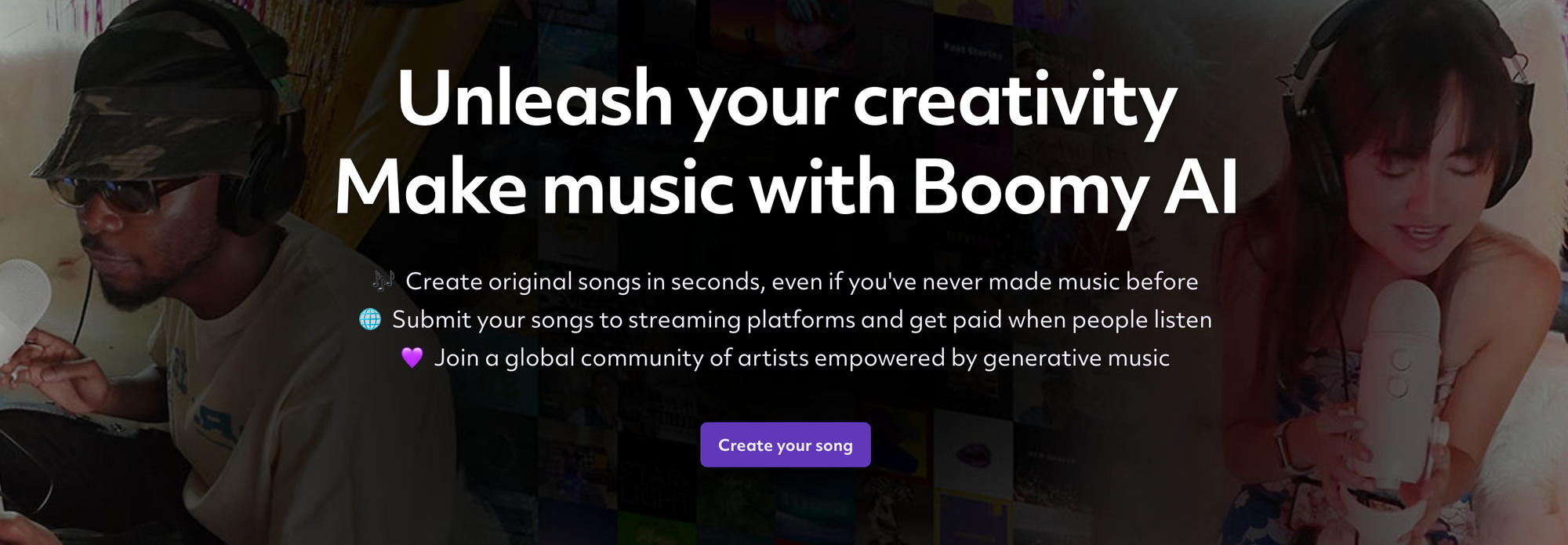

Boomy is an AI platform that lets users create new music (from existing music catalogues) and then allows anyone to generate royalty fees from that music being streamed on sites like Spotify.

Although Boomy only launched in 2021, it has already created (get this) more than 14 million tracks, making up more than 14% of recorded music: a staggering statistic.

Spotify are now taking action - deleting most AI-generated tracks coming from Boomy and trying to crack down on the artificial boosting of streams by bots, which is obviously fraud.

In short, it's all a bit of a mess really.

In a very short space of time, so much nonsense has been created by AI, and cleaning it all up and putting safeguards in place to stop it from happening again has taken so much effort, that one wonders if, instead of eliminating work, AI is actually creating a lot more work for humans to do, just to undo its work.

As always, this case study in AI causing chaos opens up some interesting questions:

What might this mean for the future of the music industry, sites like Spotify or the standards of content on the Internet in general?

Will AI, because it can scale so rapidly, literally turn the web into a garbage heap of bad content?

Will this spark new demand for human curation of content and paywalls that keep the AI-crap out?

It's not that the tool itself is without value, but rather that we are starting to better understand what its significant shortcomings are.

More: